TNNLS 2022

Federated Learning via Plurality Vote

Kai Yue, Richeng Jin, Chau-Wai Wong, and Huaiyu Dai

NC State University

Abstract

Federated learning allows multiple clients to collaboratively solve a machine learning problem while preserving data privacy. Recent studies have tackled various challenges in federated learning, but jointly optimizing communication overhead, learning reliability, and deployment efficiency remains an open problem. To this end, we propose a new scheme named federated learning via plurality vote (FedVote). In each communication round of FedVote, clients transmit binary or ternary weights to the server, which keeps the communication overhead low. The server aggregates the model parameters via weighted voting to enhance resilience against Byzantine attacks. When deployed for inference, the model with binary or ternary weights is resource-friendly to edge devices. Our results demonstrate that the proposed method reduces quantization error and converges faster than methods that directly quantize the model updates.
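The abstract states that FedVote aggregates binary or ternary weights by weighted voting. The following is a minimal sketch of a per-coordinate weighted plurality vote, assuming the per-client voting weights are already known. How FedVote actually assigns these weights is not described on this page, so `client_weights` and the function name `weighted_plurality_vote` are hypothetical. The example shows how down-weighting two sign-flipping (Byzantine) clients can restore the honest clients' majority.

```python
import numpy as np


def weighted_plurality_vote(votes, client_weights, levels=(-1, 1)):
    """Per-coordinate weighted plurality vote over quantized client weights.

    votes: (K, d) array; row k holds client k's quantized weights, each entry
        taken from `levels` (e.g. (-1, 1) for binary, (-1, 0, 1) for ternary).
    client_weights: (K,) nonnegative voting weights, e.g. a per-client
        reliability score (hypothetical; supplied by the caller here).
    Returns (winner, soft): winner[j] is the level with the largest weighted
    count at coordinate j (ties go to the earlier level), and soft[m, j] is
    the weighted share of level m at coordinate j.
    """
    votes = np.asarray(votes)
    w = np.asarray(client_weights, dtype=float)
    levels = np.asarray(levels)
    # counts[m, j] = sum_k w[k] * [votes[k, j] == levels[m]]
    counts = np.stack([(w[:, None] * (votes == lv)).sum(axis=0) for lv in levels])
    soft = counts / w.sum()
    winner = levels[np.argmax(counts, axis=0)]
    return winner, soft


# Usage: clients 0-2 are honest; clients 3 and 4 send sign-flipped weights.
votes = np.array([
    [ 1, -1,  1,  1],
    [ 1, -1, -1,  1],
    [ 1,  1,  1,  1],
    [-1,  1, -1, -1],
    [-1,  1, -1, -1],
])
winner_eq, _ = weighted_plurality_vote(votes, [1, 1, 1, 1, 1])
winner_w, soft_w = weighted_plurality_vote(votes, [1, 1, 1, 0.2, 0.2])
print(winner_eq.tolist())  # [1, 1, -1, 1]
print(winner_w.tolist())   # [1, -1, 1, 1], the honest clients' majority
```

With equal weights, the two attackers flip the outcome at coordinates 1 and 2; giving them weight 0.2 recovers the honest majority `[1, -1, 1, 1]`. The same function handles ternary weights by passing `levels=(-1, 0, 1)`.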

Fig 1. Schematic of FedVote. One round of FedVote consists of four steps. Each client first updates its local model and then sends its quantized weights to the server. The server then computes the voting statistics and sends the soft voting results back to each client.

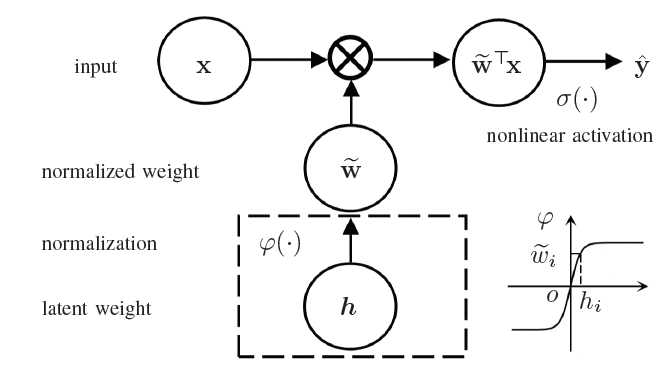
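The four steps in Fig. 1 can be sketched for binary weights as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the tanh normalization with slope `A`, the stochastic rounding rule, the clipping constant `EPS`, and the toy quadratic objective in `local_update` are all hypothetical choices made for the example.

```python
import numpy as np

A = 1.5      # hypothetical slope of the tanh normalization
EPS = 1e-3   # hypothetical clip so arctanh stays finite when a vote is unanimous


def normalize(h):
    """Map latent weights to normalized weights in (-1, 1)."""
    return np.tanh(A * h)


def inv_normalize(w):
    """Inverse of `normalize`, with clipping to keep the result finite."""
    return np.arctanh(np.clip(w, -1 + EPS, 1 - EPS)) / A


def stochastic_binarize(h, rng):
    """Sample q in {-1, +1} with P(q = +1) = (1 + tanh(A h)) / 2, so E[q] = tanh(A h)."""
    p_plus = (1 + normalize(h)) / 2
    return np.where(rng.random(h.shape) < p_plus, 1, -1)


def local_update(h, target, lr=0.5, steps=10):
    """Toy stand-in for local training: gradient descent on ||tanh(A h) - target||^2."""
    for _ in range(steps):
        w = normalize(h)
        grad = 2 * (w - target) * A * (1 - w**2)
        h = h - lr * grad
    return h


def fedvote_round(h_global, client_targets, rng):
    votes = []
    for target in client_targets:
        h_k = local_update(h_global.copy(), target)   # step 1: local update
        votes.append(stochastic_binarize(h_k, rng))   # step 2: upload +-1 weights
    votes = np.array(votes)
    p_plus = (votes == 1).mean(axis=0)                # step 3: voting statistics
    soft = 2 * p_plus - 1                             # step 4: soft vote sent back
    return inv_normalize(soft)                        # clients reset latent weights


# Usage: 50 clients that (in this toy setup) share the same target.
rng = np.random.default_rng(0)
targets = [np.array([0.9, -0.9, 0.5]) for _ in range(50)]
h = np.zeros(3)
for _ in range(5):
    h = fedvote_round(h, targets, rng)
print(np.sign(h))  # signs should match the target's signs with high probability
```

Because E[q] = tanh(A h) under this rounding rule, the fraction of +1 votes gives an unbiased estimate of the clients' average normalized weight, so mapping the soft vote back through `inv_normalize` gives every client a common starting point for the next round.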

Fig 2. Example of a single-layer quantized neural network with a latent weight vector. This structure is adopted in the neural networks used by FedVote.
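A single-layer model like the one in Fig. 2 can be sketched as a linear layer whose forward pass uses only quantized weights derived from a full-precision latent weight vector. This sketch swaps in deterministic ternary thresholding (with a hypothetical `threshold=0.5`) and a straight-through estimator, a common way to train quantized networks; FedVote's own latent-to-quantized mapping may differ (for example, it may sample the quantized weights stochastically). `QuantizedLinear` and `ternarize` are illustrative names.

```python
import numpy as np


def ternarize(h, threshold=0.5):
    """Deterministic ternary quantization: 0 if |h| <= threshold, else sign(h)."""
    return np.where(np.abs(h) > threshold, np.sign(h), 0.0)


class QuantizedLinear:
    """Single-layer linear model y = x @ q with q = ternarize(h).

    The latent weight vector h stays in full precision for training; only
    the quantized weights q enter the forward pass and would be deployed.
    """

    def __init__(self, dim, rng, threshold=0.5):
        self.h = rng.normal(scale=0.1, size=dim)
        self.threshold = threshold

    def weights(self):
        return ternarize(self.h, self.threshold)

    def forward(self, x):
        return x @ self.weights()

    def sgd_step(self, x, y, lr=0.1):
        # Mean squared error on the quantized output. The gradient w.r.t. q is
        # passed straight through to h (straight-through estimator), and h is
        # clipped to [-1, 1]. Returns the loss measured before the update.
        err = self.forward(x) - y
        grad_q = x.T @ err / len(y)
        self.h = np.clip(self.h - lr * grad_q, -1.0, 1.0)
        return float(np.mean(err**2))


# Usage: recover a ternary "teacher" weight vector from noiseless data.
rng = np.random.default_rng(0)
teacher = np.array([1.0, 0.0, -1.0, 1.0])
x = rng.normal(size=(256, 4))
y = x @ teacher
model = QuantizedLinear(4, rng)
for _ in range(200):
    loss = model.sgd_step(x, y)
print(model.weights(), loss)
```

Only `model.weights()` (entries in {-1, 0, +1}) would need to be stored for inference; the latent vector `model.h` is a training-time quantity.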

Results

Fig 3. Test accuracy versus communication round for different communication-efficient methods on (a) cross-device non-i.i.d. FEMNIST with 300 clients, (b) cross-silo non-i.i.d. CIFAR-10 with 31 clients, and (c) cross-device non-i.i.d. CIFAR-10 with 100 clients. FedVote outperforms the other communication-efficient methods, achieving the highest accuracy for a given number of communication rounds.

Citation

@article{yue2022federated,
  title={Federated learning via plurality vote},
  author={Yue, Kai and Jin, Richeng and Wong, Chau-Wai and Dai, Huaiyu},
  journal={IEEE Transactions on Neural Networks and Learning Systems},
  year={2022},
  publisher={IEEE}
}